When AI Tools Get Standing Access: Lessons from the Vercel Breach

Vercel was the latest organization to come face-to-face with an OAuth attack in February 2026. The specifics of the Vercel breach will continue to unfold, but the pattern is already familiar. A third-party AI tool with persistent OAuth access becomes the entry point. From there, the attacker moves laterally through connected accounts and into environments where credentials are available. It's not a novel technique. It's the predictable result of how most organizations provision access for AI tooling today.

I want to be clear about something: this isn't a Vercel story. This is an everywhere story.

The access model is broken by design

Most organizations today give AI tools standing access to the same resources their employees use, without applying the security controls those employees are subject to. The OAuth grant is always live. The environments are always reachable. The credentials are always there. That's not a vulnerability in the traditional sense; it's just how most organizations are built.

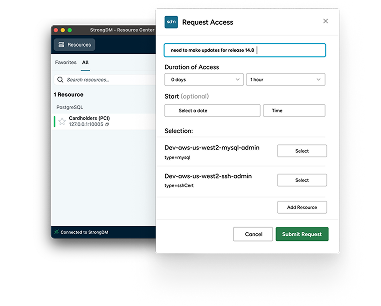

The fix is the same principle that's been reshaping human access governance: Just-in-Time. JIT means the OAuth grant only exists during an active, approved session. Access to production environments is time-bound and requires explicit policy evaluation. Even if an attacker compromises a tool in that chain, the window of exploitability shrinks from months to minutes.
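To make the time-bound idea concrete, here is a minimal sketch of a grant that only exists after approval and expires on its own. The names `JitGrant` and `issue_grant`, the 15-minute default, and the scope string are all assumptions for illustration, not any vendor's API.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta, timezone
from typing import Optional


@dataclass(frozen=True)
class JitGrant:
    """Hypothetical short-lived grant for a non-human identity."""
    identity: str
    scope: str
    expires_at: datetime

    def is_active(self, now: datetime) -> bool:
        # The grant is usable only strictly before its expiry time.
        return now < self.expires_at


def issue_grant(identity: str, scope: str, approved: bool,
                ttl_minutes: int = 15,
                now: Optional[datetime] = None) -> JitGrant:
    """Issue a grant only for an approved session, with a fixed lifetime."""
    if not approved:
        # No approval means no grant exists at all (nothing standing to steal).
        raise PermissionError(f"session for {identity} was not approved")
    now = now or datetime.now(timezone.utc)
    return JitGrant(identity, scope, now + timedelta(minutes=ttl_minutes))
```

A token stolen from a grant like this stops working once `expires_at` passes, which is the "months to minutes" shift described above.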

And for AI tooling specifically, JIT gets even more powerful. As organizations adopt MCP-connected agents — tools like Claude, Codex, and Copilot that call external services through standardized protocols — JIT becomes enforceable at the tool level, not just the account level. Instead of an AI agent inheriting whatever access its connected identity has, each tool invocation can be individually evaluated, scoped, and time-limited.

It's not enough to govern who can use an AI tool. You need to govern what that tool can do, when it can do it, and for how long.
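As a rough illustration of evaluating each tool invocation on its own, the sketch below checks a call against explicit rules and denies by default. `ToolRule`, `evaluate_call`, and the agent, tool, and resource names are hypothetical; this is not part of MCP or any specific product.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta, timezone
from typing import Iterable


@dataclass(frozen=True)
class ToolRule:
    """Hypothetical rule: which agent may call which tool, on what, until when."""
    agent: str
    tool: str
    resource_prefix: str
    not_after: datetime


def evaluate_call(rules: Iterable[ToolRule], agent: str, tool: str,
                  resource: str, now: datetime) -> bool:
    """Return True only if some rule covers this exact invocation right now."""
    for rule in rules:
        if (rule.agent == agent
                and rule.tool == tool
                and resource.startswith(rule.resource_prefix)
                and now < rule.not_after):
            return True
    # Default deny: the agent inherits nothing from its connected identity.
    return False
```

The design choice here is that the rule names the tool and the resource, not just the account, so a compromised agent cannot reach beyond what was explicitly scoped.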

The identity no one is governing

Here's the part that should make every security team pause. In the Vercel breach, the compromised tool was operating as a non-human identity with OAuth access to a human's Google Workspace — an NHI with inherited human privileges.

Every AI tool, every MCP server, every agent integration is a non-human identity. They authenticate via tokens. They access resources. They can be compromised. But in most organizations, they're provisioned quickly, given broad access, and almost never audited with the same rigor as human accounts. The industry has been warning about this gap as AI agent adoption accelerates. The governance still hasn't caught up.

Classification isn't a solution

After a breach, the conversation often turns to secrets classification — did the right credentials get tagged as sensitive? But asking humans to correctly identify, tag, and classify every secret in every environment without error is a tall order.

The alternative is a credential injection model: secrets are injected by the gateway at connection time, used for the session, and never persist in the environment. There's nothing to exfiltrate because the credential never lands anywhere an attacker can reach. Whether something is marked sensitive becomes irrelevant because the credential was never sitting there to begin with.
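The injection model can be sketched in a few lines, assuming a hypothetical `InjectionGateway` that holds the secret and a caller that only ever sees a session ID. The vault dictionary, the `authorization` field, and the `send` callback are illustrative stand-ins, not a real gateway interface.

```python
import secrets
from typing import Callable, Dict


class InjectionGateway:
    """Hypothetical gateway: callers get a session handle, never the secret."""

    def __init__(self, vault: Dict[str, str]) -> None:
        self._vault = vault                  # target name -> credential
        self._sessions: Dict[str, str] = {}  # session id -> target name

    def open_session(self, target: str) -> str:
        if target not in self._vault:
            raise KeyError(f"unknown target: {target}")
        session_id = secrets.token_hex(8)    # random handle, unrelated to the secret
        self._sessions[session_id] = target
        return session_id

    def forward(self, session_id: str, request: dict,
                send: Callable[[dict], str]) -> str:
        # Look up the target; a closed or unknown session raises KeyError.
        target = self._sessions[session_id]
        # Attach the credential to a copy, so the caller's request never holds it.
        outbound = {**request, "authorization": self._vault[target]}
        return send(outbound)

    def close_session(self, session_id: str) -> None:
        self._sessions.pop(session_id, None)
```

In this sketch the credential exists only inside the gateway and on the outbound copy of the request, so the agent's environment has nothing to tag as sensitive and nothing to exfiltrate.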

What this means for your organization

AI tooling is accelerating. The access models around it need to accelerate too. The organizations that treat every AI integration as a real identity — with real governance, real audit trails, and real time-bound access — will be the ones that don't end up in the next breach headline.

The ones that don't? They're already building the next case study.



About the Author

John Martinez, Technical Evangelist, has had a long 30+ year career in systems engineering and architecture, but has spent the last 13+ years working on the Cloud, and specifically, Cloud Security. He's currently the Technical Evangelist at StrongDM, taking the message of Zero Trust Privileged Access Management (PAM) to the world. As a practitioner, he architected and created cloud automation, DevOps, and security and compliance solutions at Netflix and Adobe. He worked closely with customers at Evident.io, where he was telling the world about how cloud security should be done at conferences, meetups and customer sessions. Before coming to StrongDM, he led an innovations and solutions team at Palo Alto Networks, working across many of the company's security products.
